AnythingLLM chose LanceDB as their vector database backbone to create a frictionless experience for developers and end-users alike. By leveraging LanceDB's serverless, setup-free architecture, the AnythingLLM team slashed engineering time previously spent on troubleshooting infrastructure issues and redirected it toward building innovative features. The result? An application that works seamlessly across all platforms with zero configuration or setup, empowering users to quickly deploy document chat and agentic workflows while maintaining complete data privacy and control.

Introduction

In an AI landscape crowded with fragmented open-source tools requiring significant technical expertise, AnythingLLM provides a solution that simplifies the deployment of powerful LLM applications. It provides a standard interface that allows users to chat with their documents and create agentic workflows to improve productivity—all while maintaining privacy through offline, local setup.

AnythingLLM has seamlessly integrated LanceDB as their vector database of choice for all applications involving context retrieval for document chat and agentic workflows. By choosing LanceDB's open-source, serverless, and setup-free architecture, AnythingLLM delivers a smooth user experience across all platforms, including Windows, which has traditionally been a pain point for many vector database solutions. Moreover, LanceDB is the only embedded vectorDB option in the node ecosystem.

Challenge

Making AI Accessible and Private

Despite the growing power of open-source LLMs and frameworks, two significant challenges stood in the way of wider adoption:

- Technical Fragmentation and Setup Complexity

- Open-source AI tools, while powerful, are fragmented and require significant technical effort to configure correctly

- Cross-platform compatibility issues lead to frustrating experiences, particularly on Windows

- Vector Database Infrastructure Hurdles

- Any useful contextual chat or agentic workflow depends on efficient vector storage and retrieval

- Most vector databases require separate infrastructure setup and maintenance

- Setup instructions vary across platforms, consuming significant engineering time to solve user issues

- Infrastructure challenges lead to user abandonment before experiencing the actual value of the application

AnythingLLM needed a solution that would eliminate vector database configuration headaches while delivering high performance across all operating systems and hardware configurations.

Solution

LanceDB: The Zero-Configuration Vector Database Backbone

To overcome these challenges, AnythingLLM integrated LanceDB as their default vector database, providing users with a truly hassle-free experience. The decision was strategic—LanceDB's architecture aligned perfectly with AnythingLLM's vision for simplicity and privacy.

LanceDB delivers critical advantages that support AnythingLLM's mission:

- Serverless and Setup-Free: Removes all friction from the setup stage, allowing users to get started immediately.

- Cross-Platform Compatibility: Works seamlessly across all platforms including Windows ARM, enabling full functionality on CoPilot AI PCs

"With support for Windows ARM, LanceDB is the only VectorDB with seamless experience across platforms and able to run fully on CoPilot AI PCs - something no other vector databases can do at this time. This only affirmed our choice that LanceDB is the best VectorDB provider for on-device AI with AnythingLLM."

- Founder CEO, Timothy Carambat @ AnythingLLM, Mintplex Labs

- Incredible Retrieval Speed: Provides fast context retrieval while being persisted on disk, scaling to significant workloads locally without memory limitations

- Native Multimodal Support: Well-suited for VLM-based applications with advanced capability to store and retrieve various data types

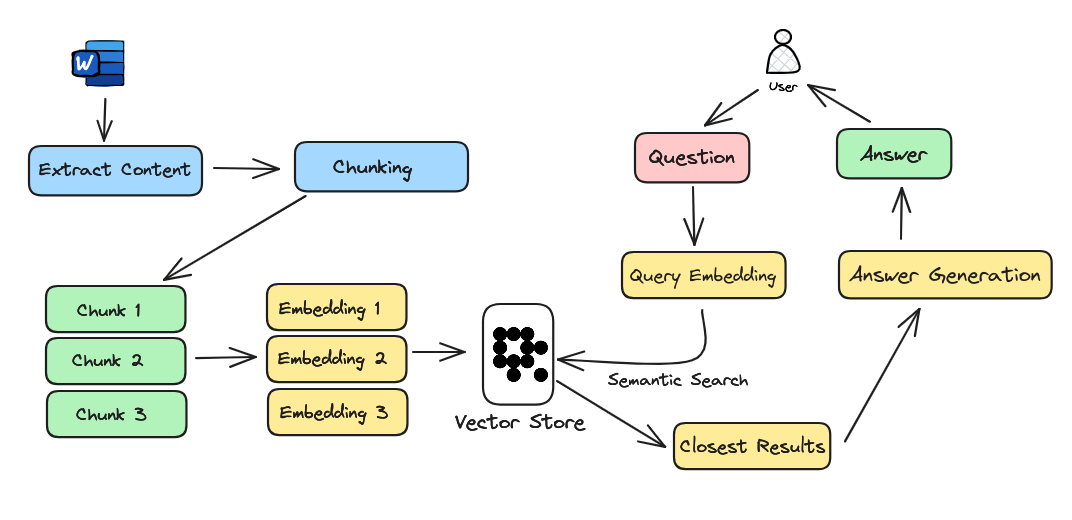

AnythingLLM leverages LanceDB for both its core RAG (Retrieval Augmented Generation) architecture and its agentic workflows:

- RAG Implementation: Documents are broken down into smaller chunks, embedded, and stored in LanceDB. These are retrieved as contexts based on user queries to assist LLMs in generating final responses.

- Agentic Flows: Unlike RAG, AI agents in AnythingLLM can take actions on APIs or local devices. The memory component of these agents relies on LanceDB's vector store for efficient information retrieval.

Results

Transformative Impact on User Experience and Engineering Efficiency

By integrating LanceDB as their vector database, AnythingLLM has achieved remarkable improvements:

End-User Benefits

"I can't even begin to describe how much time LanceDB saves us. Nearly 100% of users use our LanceDB VectorDB database as it seamlessly operates in the background managing their vectors for RAG and agents. It is blazing fast on even the lowest end hardware we target."

- Founder CEO, Timothy Carambat @ AnythingLLM, Mintplex Labs

- Zero Configuration: Users can get started immediately without any vector database setup

- Enhanced Cross-Platform Experience: Seamless operation across all platforms, including Windows ARM and CoPilot AI PCs

- Improved Performance: Blazing fast retrieval even on low-end hardware

- Complete Data Privacy: Fully local operation with no data leaving the user's device

Engineering Productivity

"Relying on LanceDB allows us to focus on building the applications and not spend any engineering or debugging time on one of the most critical pieces of infra, the vectorDB - even at millions of vectors."

- Founder CEO, Timothy Carambat @ AnythingLLM, Mintplex Labs

- Redirected Engineering Focus: Freed from solving infrastructure issues, the team can concentrate on core feature development

- Reduced Support Load: Significantly fewer user issues related to vector database setup

- Accelerated Development Cycle: More time spent on product roadmap rather than troubleshooting

- Scalability Without Concerns: LanceDB handles millions of vectors efficiently without additional engineering effort